
Two Korean companies — Samsung and SK Hynix — produce 90% of the high-bandwidth memory powering NVIDIA's AI revolution. Here's what U.S. investors need to know.

In October 2025, Jensen Huang flew to South Korea and did something unusual for the CEO of the world’s most valuable chip company. He went out for fried chicken.

Photos of the dinner spread across Korean media: Huang, jacket off, personally serving pieces of chicken to Samsung Electronics Vice Chairman Jay Y. Lee. A few months later, the scene repeated in Santa Clara — this time with SK Group Chairman Chey Tae-won at a Korean restaurant called 99 Chicken. Industry watchers gave the ritual a name: fried chicken diplomacy.

It looked casual. It wasn’t. Behind the photos sat one of the most consequential dependencies in modern technology: NVIDIA’s AI empire runs on memory chips that almost nobody outside two Korean companies knows how to make.

If you own NVDA — or any AI-exposed semiconductor stock — you’re really making a bet on Korean memory. Here’s why that matters, and how U.S. investors can play it.

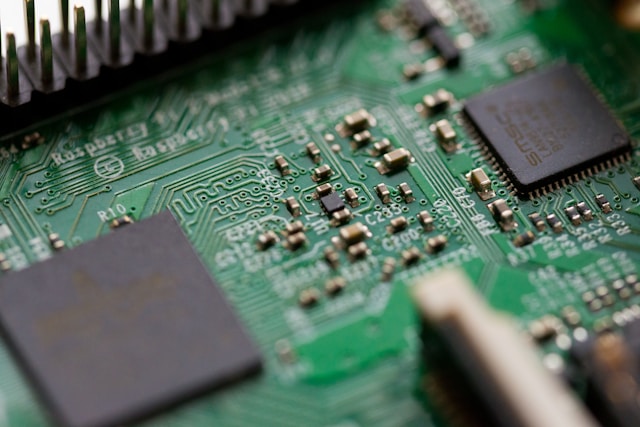

High-bandwidth memory (HBM) is the specialized DRAM that sits next to GPU dies in NVIDIA’s AI accelerators. It’s what lets a Blackwell or Rubin chip move enormous amounts of training data without choking the processor. Without HBM, the rest of the AI stack — the GPUs, the data centers, the trillion-parameter models — doesn’t work.

And HBM production is the most concentrated supply chain in advanced semiconductors. According to Counterpoint Research, three companies make HBM at commercial scale: SK Hynix, Samsung Electronics, and Micron. Two of them are Korean. Together, those two control roughly 90% of global HBM production.

The numbers from late 2025 tell the story. SK Hynix held 57-62% of the global HBM market. Samsung sat at around 17-22%. Micron rounded out the field at 11-21%. Industry trackers report that HBM capacity is fully sold out across all three suppliers through the end of 2026.
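Those ranges come from different trackers and quarters, so they do not have to sum to exactly 100%. As a rough sanity check, here is a minimal sketch that takes the three quoted ranges at face value and works out what combined Korean share they imply. The dictionary and helper names are illustrative, not from any data provider.

```python
# Share ranges as quoted above (percent of the global HBM market, late 2025).
shares = {
    "SK Hynix": (57, 62),
    "Samsung": (17, 22),
    "Micron": (11, 21),
}

def combined_range(names):
    """Add up the low ends and the high ends of the quoted ranges."""
    low = sum(shares[name][0] for name in names)
    high = sum(shares[name][1] for name in names)
    return low, high

def implied_by_remainder(other):
    """Share left for everyone else if `other` holds its quoted range."""
    low, high = shares[other]
    return 100 - high, 100 - low

# Korean share from adding the two Korean ranges directly.
korean_direct = combined_range(["SK Hynix", "Samsung"])
# Korean share implied by subtracting Micron's range from 100%.
korean_implied = implied_by_remainder("Micron")
# All three ranges together; a perfectly consistent data set would bracket 100.
total = combined_range(list(shares))

print("Korean share, summed directly:", korean_direct)
print("Korean share, implied by Micron:", korean_implied)
print("All three suppliers:", total)
```

Read this way, the quoted figures put the combined Korean share somewhere between the mid-70s and the high 80s, so the "roughly 90%" figure corresponds to the low end of Micron's range.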

HBM total addressable market: $35B in 2025 → $100B projected by 2028, a compound annual growth rate near 40%. NVIDIA reportedly accounts for ~90% of SK Hynix’s HBM supply. Server DRAM prices rose 60-70% in Q1 2026 alone.
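The growth rate is easy to verify from the two endpoints. A minimal sketch, assuming the $35B and $100B figures are year-end values for 2025 and 2028 (three compounding periods); the function name is illustrative:

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1

start_year, end_year = 2025, 2028
start_tam, end_tam = 35.0, 100.0  # billions of USD, as quoted above

rate = cagr(start_tam, end_tam, end_year - start_year)
print(f"Implied CAGR: {rate:.1%}")

# Year-by-year path implied by growing at that constant rate.
value = start_tam
for year in range(start_year, end_year + 1):
    print(year, f"${value:.1f}B")
    value *= 1 + rate
```

The result comes out at about 42%, consistent with the "near 40%" figure.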

For NVIDIA, Korean HBM concentration cuts both ways.

On the downside: it’s a single-point-of-failure risk. If Samsung’s HBM4 yields disappoint, if SK Hynix’s Cheongju fab hits an issue, if a Korean labor dispute slows production, NVIDIA’s product roadmap slips. There is no Plan C at meaningful scale. Micron is improving but still trails on both volume and the specific technical requirements NVIDIA’s latest accelerators demand.

This is why Jensen Huang flies to Seoul. This is why he eats fried chicken with chairmen. NVIDIA’s competitive position depends on relationships that look more like industrial diplomacy than supplier management.

On the upside: it’s also part of NVIDIA’s moat against competitors. Any company that wants to challenge NVIDIA’s AI accelerator dominance — Google’s TPUs, Amazon’s Trainium, custom ASICs from hyperscalers — needs the same HBM supply. And SK Hynix, in particular, has shown a strong preference for NVIDIA. As reported by CNBC in early 2026, SK Hynix had secured over two-thirds of HBM supply orders for NVIDIA’s next-generation Vera Rubin products. That gives Jensen first dibs on the best memory in the world.

The technical evidence backs this up. SK Hynix completed HBM4 development first, finished mass production preparations ahead of schedule, and partnered with TSMC, whose 12nm process is used for the logic base die. That partnership (SK Hynix on the memory, TSMC on the logic, NVIDIA on the GPU) is the most important supply chain in AI right now. None of those three can be easily replaced. Put differently: NVIDIA’s dependence on HBM is structural, not cyclical.

For most of the last decade, Samsung was the unchallenged king of Korean memory. That changed in 2025. For the first time in history, SK Hynix outearned Samsung in operating profit — 47.2 trillion won versus 43.6 trillion won. SK Hynix posted a Q1 2026 operating margin of 71.8% — the kind of number you see at Hermès, not at a semiconductor manufacturer running multi-billion-dollar fabs.

SK Hynix’s advantage came almost entirely from one product: HBM. While Samsung wrestled with HBM3E qualification issues at NVIDIA, SK Hynix locked in supply contracts that have it sold out through 2026.

But Samsung isn’t standing still. In late 2025, Samsung CEO Jun Young-hyun told employees that “customers have even stated that ‘Samsung is back.’” By early 2026, Samsung had begun shipping HBM4 chips, and reports indicated the company was close to finalizing a deal to supply over 30% of NVIDIA’s HBM4 memory for 2026. Samsung’s HBM4 reportedly hit 11.7 Gbps in internal evaluations, which would be industry-leading if validated in production. The company’s Pyeongtaek P5 fab is back under construction. The race is genuinely contested again.

We covered the head-to-head investment case in detail in our Samsung vs SK Hynix guide. For this article, the key point is structural: both Korean memory makers are positioned to grow regardless of who wins individual contracts, because total HBM demand is rising faster than either can supply alone.

Now the practical question: how do you actually invest in this story from a U.S. brokerage account? You have three realistic paths, each with a different risk-reward profile.

The most obvious play. If Korean HBM is the chokepoint and NVIDIA controls the demand side, owning NVDA gives you exposure to the entire AI infrastructure buildout. The structural argument is simple: NVIDIA captures most of the value created by the AI compute revolution, and HBM is what makes those chips work.

The catch: NVDA is already priced for substantial growth. The stock has tripled and tripled again since 2023. Position sizing matters. If you’re buying NVDA at current levels, you’re betting that AI infrastructure spending continues at or above current run rates for several more years. The HBM data supports that thesis: capacity is sold out through 2026, and OpenAI’s Stargate alone could reportedly absorb up to 900,000 DRAM wafers per month. Still, valuation always matters.

If you want exposure to the AI memory and semiconductor ecosystem without single-stock concentration, two ETFs do most of the work: the iShares Semiconductor ETF (SOXX) and the VanEck Semiconductor ETF (SMH).

Both ETFs are NVIDIA-heavy (NVDA is typically the largest holding at 15-25% depending on rebalancing). Neither directly holds SK Hynix or Samsung — those are Korean-listed only. So you get the AI memory story largely through Micron and NVIDIA, plus the broader semiconductor cycle exposure.
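Because both funds hold NVDA, owning the stock and an ETF together stacks the exposure. A minimal look-through sketch: the 10% direct position and 20% ETF allocation are hypothetical portfolio weights chosen for illustration, and the 15-25% band is the NVDA weighting range quoted above.

```python
def effective_weight(direct, etf_allocation, etf_stock_weight):
    """Portfolio weight in a stock, counting shares held inside an ETF.

    direct           -- fraction of the portfolio held in the stock itself
    etf_allocation   -- fraction of the portfolio held in the ETF
    etf_stock_weight -- the stock's weight inside the ETF
    """
    return direct + etf_allocation * etf_stock_weight

# Hypothetical portfolio: 10% in NVDA directly, 20% in a semiconductor ETF.
direct_nvda = 0.10
etf_allocation = 0.20

# NVDA's weight inside the ETF, using the 15-25% range from the text.
for etf_nvda_weight in (0.15, 0.25):
    total = effective_weight(direct_nvda, etf_allocation, etf_nvda_weight)
    print(f"ETF weight {etf_nvda_weight:.0%} -> effective NVDA exposure {total:.1%}")
```

In this hypothetical, the ETF adds three to five percentage points of NVDA on top of the direct position, which is worth accounting for when sizing either one.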

For investors who want pure exposure to the actual HBM producers, the third option is direct access to the Korea Exchange (KRX). Samsung Electronics (005930.KS) and SK Hynix (000660.KS) trade on KRX, and U.S. brokerages like Interactive Brokers provide direct access.

We walked through the full process (opening an account, FX considerations, ordering on KRX) in our guide to buying Korean stocks from the U.S. The friction is real (different time zones, currency conversion, slightly higher fees), but for high-conviction investors who want unambiguous HBM exposure, this is the only direct route.

For diversified Korean equity exposure — including memory but also other Korean blue chips — EWY and FLKR remain the simplest one-ticker options. Both contain Samsung and SK Hynix as top holdings. We compared them in detail in our EWY vs FLKR analysis.

NVDA = the direct AI compute bet. SOXX/SMH = the diversified semiconductor cycle bet. SK Hynix/Samsung on KRX = the pure HBM supply-side bet. They’re complementary, not substitutes.

The bull case for AI memory is strong but not bulletproof. A few things would change the picture.

The AI infrastructure boom looks like an American story — NVIDIA chips powering U.S. hyperscaler data centers training U.S. foundation models. Look one layer down at the supply chain, though, and the most concentrated, capacity-constrained, pricing-power-rich part of the stack is Korean. Samsung and SK Hynix don’t just supply NVIDIA. They make NVIDIA’s roadmap possible.

For U.S. investors, the practical takeaway is that AI memory exposure is no longer optional in any serious tech allocation. Whether you express that through NVDA, semiconductor ETFs, or direct Korean memory ownership depends on conviction, time horizon, and risk tolerance. But ignoring the HBM story means missing where the value actually accrues.

Bloomberg Intelligence Senior Technology Analyst Woo Jin Ho breaks down the 2026 AI hardware outlook — including memory supply dynamics and the Korean producers powering NVIDIA’s roadmap. His institutional perspective complements the structural analysis above.


Disclaimer: This article is for informational purposes only and does not constitute financial advice. Always consult a licensed financial advisor before making investment decisions.